Member stories

Driving digital transformation: how Adult Learning Wales used our digital elevation tool

Using our digital elevation tool, Adult Learning Wales engaged managers, tailored assessments and continues to advance digital transformation across its organisation.

Driving technology transformation at The College Merthyr Tydfil using Jisc’s digital elevation tool

Our digital elevation tool is helping accelerate digital transformation, strengthen cyber security, enhance teaching and learning, and secure funding, while empowering staff and improving the student experience.

Supporting student success with learning analytics at Abertay University

Learning analytics and attendance monitoring have become an established part of timely and proactive engagement for Abertay students at risk of leaving.

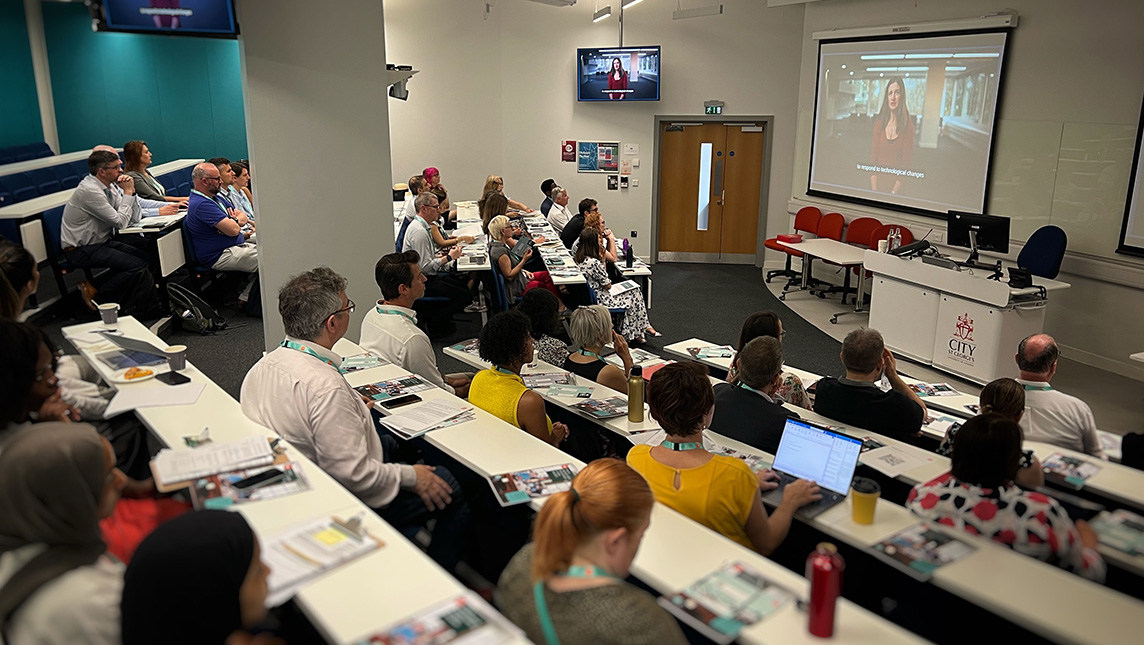

From two universities to one digital culture: City St George’s transformation journey

City St George's embarked on a people-centred approach to digital transformation that prioritised building digital culture and engagement alongside foundational technology projects.

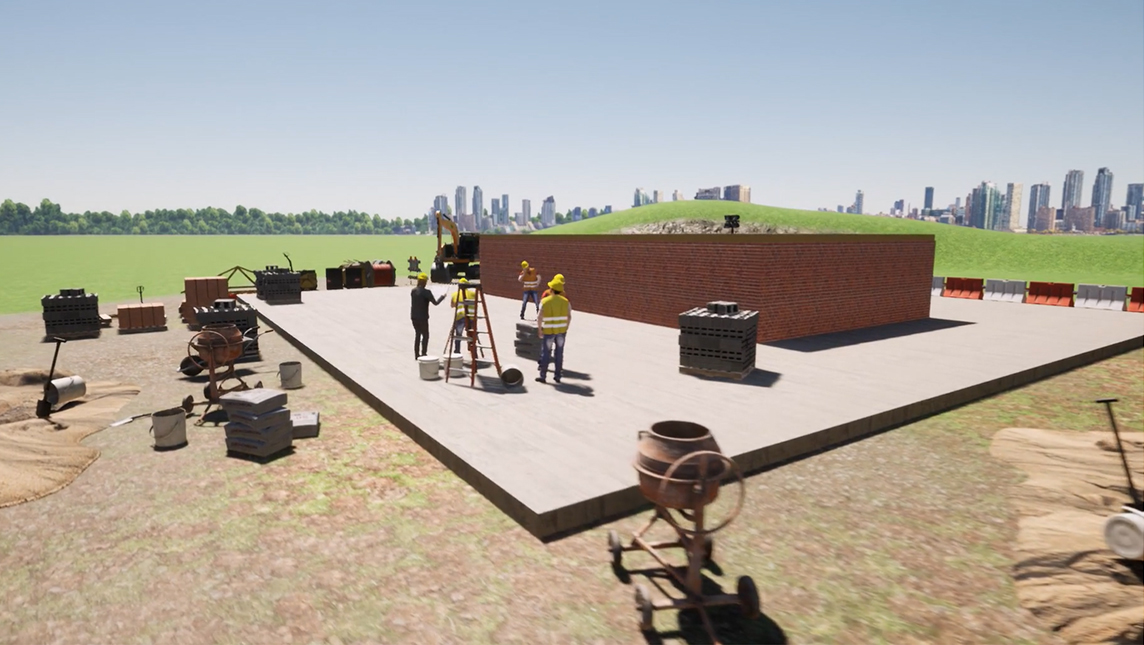

Building skills in virtual reality: how NCG is preparing students for the world of work

Virtual reality has opened up new ways for students at NCG to build employability skills, offering safe, immersive experiences in industries such as construction and nursing without leaving campus.

How City College Plymouth achieved award-winning productivity gains

Google’s standard workspace tools helped City College streamline their teaching observation process, save a thousand hours a year, and win an AoC Beacon Award.

Co-designing global approaches to digital transformation

An ALT-Jisc award-winning project from Liverpool John Moores University and Phu Xuan University in Vietnam has fostered new digital approaches and strengthened bonds across different continents.

Purpose, people and process: the University of Chester's recipe for co-designed digital transformation

The University of Chester discovered that successful digital transformation is about much more than the technology – it's about purpose, collaboration and really listening to people's wild dreams.

Scaling digital heights: Bath Spa's transformation journey

How Bath Spa’s collaborative working ethos has informed its approach to digital transformation.

Open access and academic equity: the journey to publication with the University of Manchester

Dr Ellie Gore's recent publication with the University of Manchester underscores the impact of open access publishing on the academic community and beyond.

Making generative AI work for learners and staff at Nottingham College

Philippa Armstrong, learning technology coach at Nottingham College, discussed their approach to generative AI live at Digifest 2025 for our Beyond the Technology podcast.

Go try, fail fast, try again: how Queen’s University Belfast is embracing AI

Queen’s University Belfast is taking a positive, proactive approach to AI – and using Jisc’s digital transformation toolkit has helped bring the whole community on board with its AI strategy.

Two strands, one goal: advancing open research and data management

Our digital preservation consultancy provided crucial guidance when Liverpool John Moores University embarked on a project to rethink open research and research data management.

Digital learning and dynamic spaces: How e-books are transforming the student experience at Itchen College

When Adrian Waters stepped into the role of head of student support at Itchen College over two years ago, he joined a team committed to reshaping how students accessed learning resources.

Start with people: Sheffield Hallam University’s digital learning transformation project

Giving staff and students the digital skills and capabilities they need has been at the centre of Sheffield Hallam’s digital learning transformation project.

Global access and seamless support: Digital assessment at Vienna University of Economics and Business

Vienna University of Economics and Business (WU Vienna) has enhanced its accessibility for international students taking assessments while ensuring academic integrity and cost savings.

Digital preservation – more than just storage

When the University of Aberdeen started to look at information management and consider how best to manage its collections, Jisc’s digital preservation consultancy provided essential support.

Discovery to delivery: the University of Suffolk’s data quality journey with attendance monitoring

Data quality was a vital requirement when implementing attendance monitoring at the University of Suffolk and along the way they uncovered a wealth of benefits for their students and staff.

A bot named Winnie

Like many others across the sector, Windsor Forest Colleges Group hit a crunch point where it became clear that the use of artificial intelligence (AI) was inevitable.

Digital transformation at a global university

Heriot-Watt is a distinctive university: Scottish in its heritage and global in its footprint. So how does digital transformation work across such a geographically scattered institution?

By design and delivery: embedding flexible learning at the University of Manchester

The University of Manchester has committed to delivering blended learning by default for all on-campus students with its innovative flexible learning programme.

People, place and partnership at Ulster University

How one of the most complex universities in the UK is transforming digitally to make technology work harder at bringing people together.

How an AI ‘study buddy’ is providing support for the University of London’s students

The university successfully piloted an intuitive AI study buddy to provide support for distance learning students.

University of Hertfordshire's new route to procurement

After discovering their institutional repository couldn’t keep up with evolving needs, the team at the University of Hertfordshire explored a new route to procurement.

Unlocking the future with Remote Labs

The University of Edinburgh has been using Remote Labs to revolutionise the way students access engineering and promote curriculum change.

Navigating generative AI with Copilot at South Staffordshire College

18 months into their journey with generative AI, the benefits of Microsoft Copilot are being felt in the classroom and beyond at South Staffordshire College.

Activate Learning's mission to redefine remote learning

With their innovative learning philosophy flipping the traditional paradigm on its head, Activate Learning developed an award-winning online programme to empower adult learners.

Bridging the connectivity gap in rural clinical settings in Powys

Cardiff University and Powys Teaching Health Board had been searching for a solution to give students a fully supported, rich experience of life off campus. A new device, extending eduroam, proved to be the solution.

From scoping to implementing: insights and guidance from Newcastle’s learning analytics journey

The learning analytics project team from Newcastle University share their experience implementing data explorer and study goal.

A RAAC race against time: connecting a sixth form at speed

Imagine finding out, just days before your sixth form students are due to start their courses in September, that the school building is unsafe for use and there is nowhere for them to learn…

Scrums and squads: how Exeter’s agile culture is paying off for digital transformation

Scrum master, squads, fortnightly sprints, backend developers… These might not be everyday terms in most UK universities but they’re behind the approach powering digital transformation at the University of Exeter – and it’s working.

Using the power of collaboration to demystify open monographs

Elaine Sykes, head of open research at Lancaster University, explains how the last twelve months have seen their library team work tirelessly to bring open monographs to the forefront of planning.

Forensic frontiers: revolutionising crime scene analysis with VR technology

Imagine sitting in a boardroom, putting on a virtual reality (VR) headset, and you’re instantly transported to a 3D view of the crime scene hundreds of miles away. Sounds like science fiction?

Building digital capability in the land down under

The University of Wollongong (UOW) is empowering its students on a self-guided journey to take their digital capabilities to the next level and make them stand out in the job market.

Curiosity and culture: blending digital and physical at the University of Northampton

When the university's new town centre campus opened, it was an unmissable opportunity to integrate digital innovation into the physical design of the campus and the student offer. But the success of such an extensive change programme depends on a third element: culture.

How UCL is redesigning assessment for the AI age

UCL is reimagining assessment and feedback in response to the rise of generative AI and the opportunities and challenges it brings.

Greenwich’s five steps to digital strategy success

The University of Greenwich has successfully developed a culture of digital transformation that runs right through the university. Find out their five steps to success.

Simmersive Staffordshire: bringing together people, place and pedagogy for practising clinical skills

Imagine you’re a student nurse about to put a needle into a patient’s vein: your first ever intravenous cannula. How would you introduce yourself to the patient, allay their nerves, explain what you’re about to do, prepare the equipment and then perform the physical procedure?

Coleg Y Cymoedd: Cyber security assessment

Focused on improving their cyber security posture, the IT team at Coleg Y Cymoedd, together with Jisc, completed a cyber security assessment. By evaluating the effectiveness of their current measures, the process enabled them to identify what steps they could take to strengthen their security further.

A Teesside University and Jisc collaboration is at the heart of a digital learning toolkit

When Teesside University embarked upon a new piece of work to create a framework for digital learning, the team didn’t quite envision the scale of the project.

Blended learning with a twist at the Heart of Worcestershire College

Blended learning is nothing new for the team at the Heart of Worcestershire College. They’ve been teaching in this way for nearly a decade. But three years ago, they set about running a major project that would span the entire institution and fundamentally change how subjects were taught.

Cardiff University’s multi-disciplinary approach to teaching in clinical disciplines with immersive technology

Cardiff University has been on a journey to develop a virtual hospital for Wales. The immersive online platform was developed in partnership with several Welsh universities, NHS Wales health boards, and Virtustech – a VR company founded by Cardiff University alumni.

Why West College Scotland’s digital strategy prioritises staff development and inclusion

When West College Scotland embarked on their digital transformation journey in 2019, Angela Pignatelli, assistant principal, became the college lead, creating and chairing the digital strategy group which was to spearhead inclusive and effective digital practices throughout the organisation.

Bangor University: A data-driven and collaborative digital transformation journey

By taking a data-driven and collaborative approach, Bangor University has been listening and engaging effectively with their staff and students to understand the challenges they face and to provide the right support that will enable them to thrive in a digital world.

Digital leadership at PRP Training

After the pandemic caused a sudden shift to online learning, Clare Barley took the opportunity to reflect on how she was using digital in her leadership role.

How a low carbon house conversion is set to become Bridgend College’s newest teaching space

Like many institutions across the education sector, Bridgend College has carbon net zero firmly on the agenda. The team has recently embarked on a project to retrofit a property on the Pencoed campus into a low carbon demonstration house.

Digital by design: how Belfast Met are driving digital transformation

With an ambitious strategic plan for 2021-24, Belfast Met has digital at its forefront. One of five core objectives is ‘digital by design’, but what does it mean and what is the college doing to meet this goal?

The Engineering Professors’ Council: Using data to inform and shape the future of engineering

The Engineering Professors’ Council (EPC) has developed a new data explorer which is helping to shape the future of engineering.

From the farm to the sports hall: ‘Discover Digital’ at Barnsley College puts digital learning in every space

When Barnsley College embarked on a digital transformation project that would span all service areas across all 14 campuses, Rachel James, assistant principal for teaching and learning, had a challenge ahead of her.

Fun, engaging, and interactive: how VR changed open evenings at Gower College Swansea

Gower College Swansea's Open Class platform offers 3D classrooms and virtual reality tours, allowing prospective students to virtually visit before attending an open evening, calming any anxiety about a new and potentially overwhelming setting.

Adaptability, data and digital poverty: digital assessment at Brunel University

Migrating pen-and-paper exams to online assessments is not without its challenges. For Brunel University London, the data generated from their digital assessments provided insight that could be used to monitor equality and inform the response to student welfare issues.

“A glimpse into the future”: Basingstoke College of Technology’s pioneering LaunchSpace facility

Basingstoke College of Technology (BCoT) is bringing the future of digital learning to the present with LaunchSpace, a set of digitally enhanced suites which enable practical, skills-based learning and cater to industry demands.

How the City of Liverpool College is using technology to provide financial support to those students most in need

By introducing a fully integrated digital platform to manage their student finance applications, the City of Liverpool College have been able to work more sustainably and focus their efforts on providing targeted and quality support to those students most in need of their help.

The Turing Way forward

Our community champion programme recognises and celebrates inspiring people from further and higher education and research, who go above and beyond to collaborate and share experiences for the good of all.

Sparking a passion for digital skills: a tried and tested approach to developing staff confidence

The digital experience insights survey 2020/21 revealed that only 6% of higher education teaching staff felt they received recognition for developing their digital skills. But with more being expected from educators, are they getting the support and recognition they need?

Creating library vibes for students studying remotely

Study sessions in the university library are, for many, a fundamental part of the student experience. But what happens if you take the physical library out of the equation?

Askham Bryan’s roadmap to the future of digital learning

Through investment and a ‘teachers helping teachers’ philosophy, Askham Bryan College plan to build a support community to improve the digital skills of their students and achieve their digital vision for 2030.

Meet Marcel, the library chatbot supporting hard-to-reach learners

Work-based, distance and vocational learners often find it challenging to access library staff expertise and resources. Gower College Swansea’s library-specific chatbot aims to level the playing field, bringing their resources out to the learners.

How Kirklees Council is tackling digital inequality through eduroam

Terence Hudson, head of technology at Kirklees Council, explains how taking up Jisc’s offer of rolling out eduroam with govroam is helping the council tackle digital inclusivity and creating a long-term positive impact for the whole region.

Cardiff Metropolitan University: emerging stronger from cyber-attack

When Sean Cullinan, head of information services at Cardiff Metropolitan University, realised the university’s systems were under attack, it kickstarted a powerful working relationship with Jisc that transformed security.

Digital transformation at Rochford District Council

Rochford District Council in Essex needed to streamline its infrastructure to be in a position to deliver better services to its residents. Thanks to its partnership with Jisc, the council is now working more efficiently and securely, and saving money too.

From USB sticks to terabyte data transfers: how astronomers are making the universe feel smaller

While astronomers tackle the largest research area possible – the entire universe beyond the Earth’s atmosphere – just a few telescopes around the world provide much of the data they study to uncover the secrets of the universe’s celestial objects.

West of England Institute of Technology: Building connections to beat the skills gap

The launch of the West of England Institute of Technology (WEIoT) marked a giant leap forward in the efforts regionally and nationally to close the skills gaps in key STEM areas.

Powering discoveries that benefit humankind

Understanding the causes of disease is crucial to identifying potential treatments and, ultimately, finding cures.